But for this, the network shall transform into one big linear transformation, and it will not be possible to model non-linear relationships with such a network. This is needed to introduce non-linearity in the network structure to enable to network to learn non-linear relationships.

We need to add some form of activation to the y y y vector by adding an activation layer. Note that in the above figure, although the output is represented as a sone node, it only means that the output vector can be of any dimensionality, the same or different from the dimensionality of the input vector.Īlso, using the linear layer to construct deep neural networks is needed more than using the layer to transform the input vector. The linear layer is also called the fully connected layer or the dense layer, as each node (also known as a neuron) applies a linear transformation to the input vector through a weights matrix.Īs a result, all possible connection layer-to-layer connections are present hence, every input of the input vector influences every output of the output vector. Where x is the input vector and W is the weight matrix multiplied by it, b is an optional bias term that is added to the result of this matrix multiplication to yield the linearly transformed vector y, and hence the name linear layer.ĭiagrammatically, the linear layer can be represented as the following :

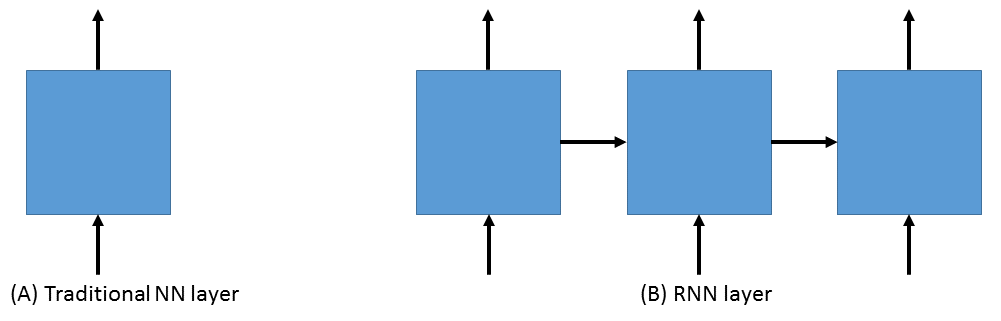

The layer does this by making use of a weight matrix and optional bias term and brings about a linear transformation of the form : The most basic of all layers used in deep neural networks is the linear layer that takes in an input vector of any dimensionality and transforms it into another vector of potentially different dimensionality. Working knowledge of Python is assumed.Working knowledge of matrix and matrix operations like matrix multiplications, vectors, and so on.To get the best out of this article, the reader should be familiar with basic linear algebra concepts like : To this end, this article talks about the foundational layers that form the backbone of most deep neural architecture to learn complex real-world non-linear relationships present in the input data while also implementing a working example of each in PyTorch like PyTorch linear layers and PyTorch embedding layer. The different layer in deep neural architectures allows the networks to learn features and hence explore patterns from the data that are then generalized to real-world data to build AI-powered applications. Deep neural networks are a term given to a wide variety of machine learning modeling architectures that contain many layers of potentially different types and are capable of learning representative features from the data rather than requiring hand-created features to learn the relationship between the input and the output data.

0 Comments

Leave a Reply. |

Details

AuthorWrite something about yourself. No need to be fancy, just an overview. ArchivesCategories |

RSS Feed

RSS Feed